Algorithmic black box as modern extension of Hume’s problem

Image generated by AI

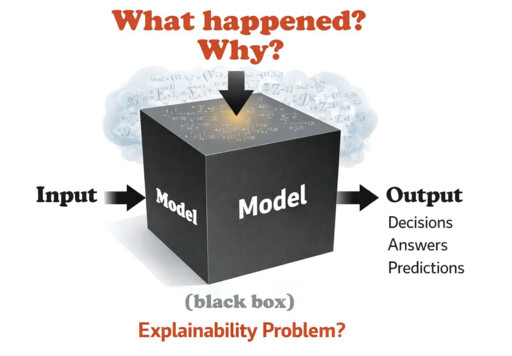

The operation and decision-making mechanisms of large language models (LLMs) are opaque to external users, who can observe only inputs and outputs, with little insight into how results are generated from massive datasets. This “comprehension gap” inevitably raises doubts about the reliability of LLM-generated outputs: if we do not know how an output is produced, on what grounds can we judge whether it is trustworthy? This is the essence of the so-called “algorithmic black box” problem.

The conventional view holds that a system’s output is reliable only when its underlying processes and decision-making methods can be clearly explained in human language or through formal reasoning. However, the successful application of large language models such as ChatGPT and DeepSeek demonstrates that certain systems can still produce highly reasonable outputs and gain widespread acceptance even when they cannot be fully understood.

This forces us to reflect: How should we understand LLMs? On what basis can we reasonably accept their outputs? Clarifying these issues is indispensable for effective artificial intelligence (AI) governance. Therefore, among the many philosophical issues of AI, the algorithmic black box problem occupies a central position. It concerns not only technical transparency and interpretability but also deeper issues such as the human-machine trust mechanism.

Isomorphic structures

Can reliable scientific knowledge be derived from limited empirical experience? This is the famous problem exposed by David Hume, who argued that inductive reasoning cannot logically justify the inference from “the past has been so” to “the future will be so.” Such inference presupposes the principle of the uniformity of nature—the assumption that the future will resemble the past—but this principle itself cannot be demonstrated. From this, Hume concluded that scientific knowledge derived from induction cannot claim logical necessity or demonstrative certainty on the basis of finite experience.

LLMs exhibit pronounced inductive characteristics in both training and generation. During training, they statistically analyze correlations—often conceptualized as “distances”—between tokens in massive corpora. During generation, they predict the next token on the basis of these learned correlations and select among candidate tokens through strategies such as greedy search, random sampling, or beam search, thereby producing sentences, paragraphs, and extended texts. In essence, this process amounts to identifying contextual regularities: which tokens are likely to appear within a given context. Yet these regularities are inferred from existing data distributions and cannot guarantee applicability to novel situations.
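The difference between two of the selection strategies mentioned above can be illustrated with a minimal sketch. It assumes a toy vocabulary and invented scores (the `logits` dictionary and the helper names are hypothetical, not taken from any real model), and it leaves out beam search for brevity:

```python
import math
import random

# Hypothetical scores a model might assign to candidate next tokens
# after the context "The sun will rise"; the numbers are invented.
logits = {"tomorrow": 3.0, "again": 2.0, "slowly": 0.5, "never": -1.0}

def softmax(scores, temperature=1.0):
    """Turn raw scores into a probability distribution over tokens."""
    top = max(scores.values())  # subtract the max for numerical stability
    exps = {t: math.exp((s - top) / temperature) for t, s in scores.items()}
    total = sum(exps.values())
    return {t: e / total for t, e in exps.items()}

def greedy_pick(probs):
    """Greedy search: always take the single most probable token."""
    return max(probs, key=probs.get)

def sample_pick(probs, rng):
    """Random sampling: draw a token in proportion to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

probs = softmax(logits)
rng = random.Random(0)  # fixed seed so repeated runs draw the same tokens
greedy_token = greedy_pick(probs)
sampled_tokens = [sample_pick(probs, rng) for _ in range(5)]

print(greedy_token)
print(sampled_tokens)
```

Both strategies read from the same learned distribution. Neither says anything about whether that distribution still holds for a context unlike those in the training data, which is where the inductive gap lies.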

In this respect, the algorithmic black box mirrors classical skepticism about induction. Our trust in algorithmic outputs, like our belief that the sun will rise tomorrow, lacks logical necessity; it rests on empirical expectation. The two problems are therefore structurally isomorphic. Empirical research published in 2024 by Macmillan-Scott and Musolesi showed that all of the LLMs tested produced incorrect answers; even GPT-4, then considered state-of-the-art, achieved an accuracy rate of only 69.2%. Should we therefore abandon generative AI built on LLMs? The answer is no. Inductive reasoning has long faced Hume’s challenge, yet it remains indispensable for navigating uncertainty and acquiring knowledge. In addressing algorithmic black boxes, we may similarly draw on philosophical responses to Hume’s problem.

Inductive acceptance and cognitive decision-making

Although Hume’s problem appears to undermine the foundations of scientific knowledge, philosophers have not relinquished induction. One influential line of response is the theory of “inductive acceptance,” which proposes three core rules. First, the high-probability rule: if, given available evidence, the probability that a proposition is true exceeds 0.5, the subject has reason to accept it. Second, the deductive closure rule: the subject must accept the logical consequences of propositions already accepted. Third, the consistency rule: the set of accepted propositions must be logically coherent. These rules provide a potential framework for addressing algorithmic black box problems, offering a way to reasonably accept LLM outputs without fully “understanding” the internal mechanisms of the models.

However, the lottery paradox—formulated by Henry Kyburg in 1961—revealed a tension within this framework. If one simultaneously accepts all three rules, one may be committed to the mutually contradictory propositions that no lottery ticket will win and that some ticket must win. In response, American philosopher Isaac Levi argued that inductive acceptance should not be understood simply as the acceptance of high-probability propositions. Rather, it involves balancing two cognitive aims: the pursuit of truth (or error avoidance) and the resolution of suspense. The latter refers to the reduction of uncertainty about matters of concern.
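The tension can be made concrete with a small calculation. The sketch below assumes a fair lottery with one million tickets and exactly one winner, and uses the 0.5 threshold of the high-probability rule; the variable and function names are illustrative only:

```python
from fractions import Fraction

n_tickets = 1_000_000          # fair lottery, exactly one winning ticket
threshold = Fraction(1, 2)     # cutoff used by the high-probability rule

def p_first_k_all_lose(k, n=n_tickets):
    """Probability that none of tickets 1..k is the single winner."""
    return 1 - Fraction(k, n)

# High-probability rule: each claim "ticket i will not win" is accepted.
p_single_claim = p_first_k_all_lose(1)
each_claim_accepted = p_single_claim > threshold

# Deductive closure: accepting every claim commits us to their conjunction,
# "no ticket will win", which has probability 0.
p_no_ticket_wins = p_first_k_all_lose(n_tickets)

# "Some ticket will win" is certain by the lottery's setup, so it is accepted too.
p_some_ticket_wins = 1 - p_no_ticket_wins

print(p_single_claim)        # 999999/1000000
print(each_claim_accepted)   # True
print(p_no_ticket_wins)      # 0
print(p_some_ticket_wins)    # 1
```

Each individual claim clears the threshold easily, yet closure forces acceptance of a conjunction with probability 0 alongside a proposition with probability 1, which violates the consistency rule.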

Consider a lottery with one million tickets and a single winner. If the question at issue is “Which ticket will win?”, accepting the proposition “Ticket 1 will not win” has high probability but does little to reduce overall uncertainty. By contrast, if the question is “Will Ticket 1 win?”, accepting “Ticket 1 will not win” both has high probability and fully resolves the relevant uncertainty. Acceptance therefore depends not only on probability but also on the subject’s practical concerns. Levi introduced a “caution index” q to regulate the relative weight assigned to truth-seeking and suspense resolution. A higher q places greater emphasis on eliminating uncertainty; a lower q prioritizes error avoidance. By adjusting q, one can avoid both extremes: refusing all probabilistic claims in pursuit of abstract certainty (q = 0) or accepting contradictions in the name of decisiveness (q = 1).
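How the question at issue changes what may be accepted can be sketched in a few lines. The sketch rests on assumptions not stated in this article: it uses one simplified reading of Levi’s rule, under which a possible answer h is rejected when p(h) < q · M(h), with M(h) = 1/n for a question that has n mutually exclusive answers. It also uses a smaller lottery of 1,000 tickets to keep the run fast, and the value of q is arbitrary:

```python
from fractions import Fraction

def unrejected(probabilities, q):
    """Return the answers that survive the assumed rejection rule.

    Assumption: an answer h is rejected when p(h) < q * M(h), where
    M(h) = 1/n for a question with n mutually exclusive answers.
    """
    n = len(probabilities)
    cutoff = q * Fraction(1, n)
    return [h for h, p in probabilities.items() if p >= cutoff]

n_tickets = 1_000                 # smaller stand-in for the million-ticket lottery
p_win = Fraction(1, n_tickets)    # chance that any given ticket wins
q = Fraction(1, 2)                # hypothetical setting of the index q

# Question A: "Will Ticket 1 win?" has two possible answers.
question_a = {
    "Ticket 1 wins": p_win,
    "Ticket 1 does not win": 1 - p_win,
}

# Question B: "Which ticket will win?" has one possible answer per ticket.
question_b = {f"Ticket {i} wins": p_win for i in range(1, n_tickets + 1)}

survivors_a = unrejected(question_a, q)
survivors_b = unrejected(question_b, q)

print(survivors_a)
print(len(survivors_b))
```

Under these assumptions, Question A leaves only “Ticket 1 does not win” standing, so accepting it resolves the question. Question B rejects no ticket, so judgment stays suspended and the contradictory conclusion “no ticket will win” is never reached. Setting q to 0 rejects nothing in either case, which corresponds to the refusal to commit described above.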

Levi thus reconceived inductive acceptance as a form of cognitive decision-making. An inductive conclusion need not be certain, nor even highly probable in isolation; it must instead occupy an equilibrium point at which its expected cognitive utility exceeds that of competing propositions. Inductive knowledge, on this account, represents the optimal choice under conditions of limited evidence, guided by both cognitive and practical rationality. This provides a philosophical foundation for the rational legitimacy of inductive inference.

Toward a governance framework

The algorithmic black box problem can be understood as a contemporary version of the problem of inductive acceptance. Accepting algorithmic outputs requires observing and verifying results on the basis of limited evidence, much as induction proceeds from finite cases. At the same time, the future performance of algorithmic systems is inherently uncertain. Demanding complete transparency is therefore as unrealistic as demanding absolute certainty from inductive knowledge.

Levi’s cognitive decision-making framework suggests that while inductive knowledge inherently carries probabilistic uncertainty, it can be responsibly managed through practical rationality and risk assessment. This insight offers a direction for addressing algorithmic black boxes: as inductive systems, algorithms test the limits of bounded human rationality in understanding machine intelligence, and they therefore call for solutions grounded in practical rationality rather than demands for absolute certainty.

Early responses to algorithmic opacity generally fall into two categories. Technical approaches treat the black box as a purely technical problem, seeking greater transparency through explainable AI (XAI), visualization, or model simplification. Yet the nonlinear complexity of contemporary models means that even specialists struggle to achieve full comprehension, while ordinary users may experience “explanatory overload.” Normative approaches, by contrast, operate within a “rights-and-power” framework, emphasizing legal accountability and ethical constraints. These efforts often remain abstract if they fail to engage with technical feasibility.

Both approaches risk isolating technology from cognition and normativity, thereby leaving unresolved the fundamental tension between the reliability of outputs and the incomprehensibility of the process that produces them. From a cognitive standpoint, relying on partially opaque systems resembles relying on medical diagnoses or complex scientific theories that most people cannot fully follow. Seen in this light, the black box appears less as a threat to the essence of knowledge than as a concrete manifestation of bounded rationality: a structural limitation in human cognition rather than a breakdown of epistemic principles.

A more promising path is to incorporate cognitive considerations into governance, constructing a framework of “bounded transparency and defensible decision-making.” Such a framework may rest on three pillars. First, a gray-box model of interpretation that abandons the illusion of total transparency and seeks a workable balance between complexity and partial comprehensibility. Second, a hierarchical explanatory mechanism that offers differentiated levels of explanation to end users, regulators, and developers, avoiding both redundancy and insufficiency. Third, the cultivation of algorithmic trust by distinguishing between the technical difficulty of explanation and the cognitive possibility of understanding, focusing on input–output relations and establishing “trust” in algorithms from a functional dimension.

The algorithmic black box is therefore not merely a technical defect, but a cognitive challenge analogous to earlier “technological black boxes” in human history. It does not overturn the core mechanisms of knowledge—verification, error correction, and rational decision-making. By integrating philosophical reflection on inductive rationality into technical design, emphasizing functional understanding of algorithms, and fostering interpretive and trust-building practices compatible with human cognition, we may learn to engage with generative AI critically and constructively. Such an approach offers a path toward a more balanced coexistence with intelligent technologies in the AI era.

Li Zhanglyu is a professor at the Institute of Philosophy at the Chinese Academy of Social Sciences.

Editor: Yu Hui

Copyright©2023 CSSN All Rights Reserved